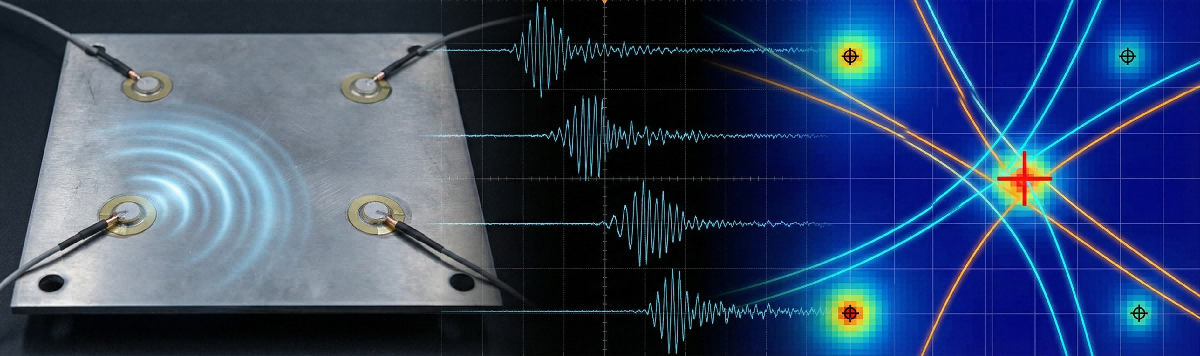

Acoustic Emission Source Localization: A Practical Guide to TDOA-Based Structural Health Monitoring

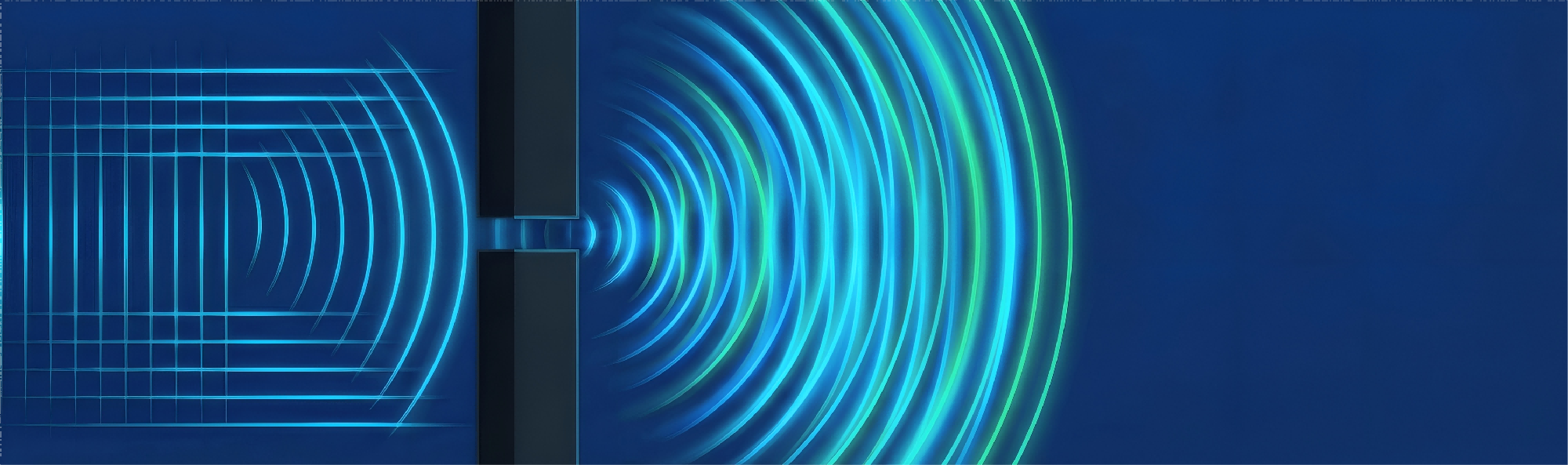

Learn how to locate damage in structures from the acoustic (elastic stress) waves it emits. This article explains the physics of acoustic emission (AE) and the mathematics of time-difference-of-arrival (TDOA) localization, then provides complete MATLAB code that takes you from theory to a working implementation.